The State of MCP Security in 2026: What You Need to Know

A comprehensive look at the security landscape of the Model Context Protocol ecosystem - from tool poisoning attacks to emerging defenses.

The Model Context Protocol has gone from a niche integration standard to the backbone of agentic AI in barely a year. With 97 million monthly SDK downloads and over 8 million MCP server downloads across registries, MCP is now embedded in everything from IDE assistants to enterprise automation pipelines. But this rapid adoption has outpaced security tooling, and the consequences are starting to show.

This post surveys the current state of MCP security: what we know, what has gone wrong, and what the ecosystem is building to fix it.

The Attack Surface Is Larger Than You Think

MCP servers are essentially tool providers for AI agents. When an agent connects to an MCP server, it receives a set of tool definitions — names, descriptions, and input schemas — that it can invoke. The problem is that most agents trust these definitions implicitly. They have to: the whole point of MCP is dynamic tool discovery.

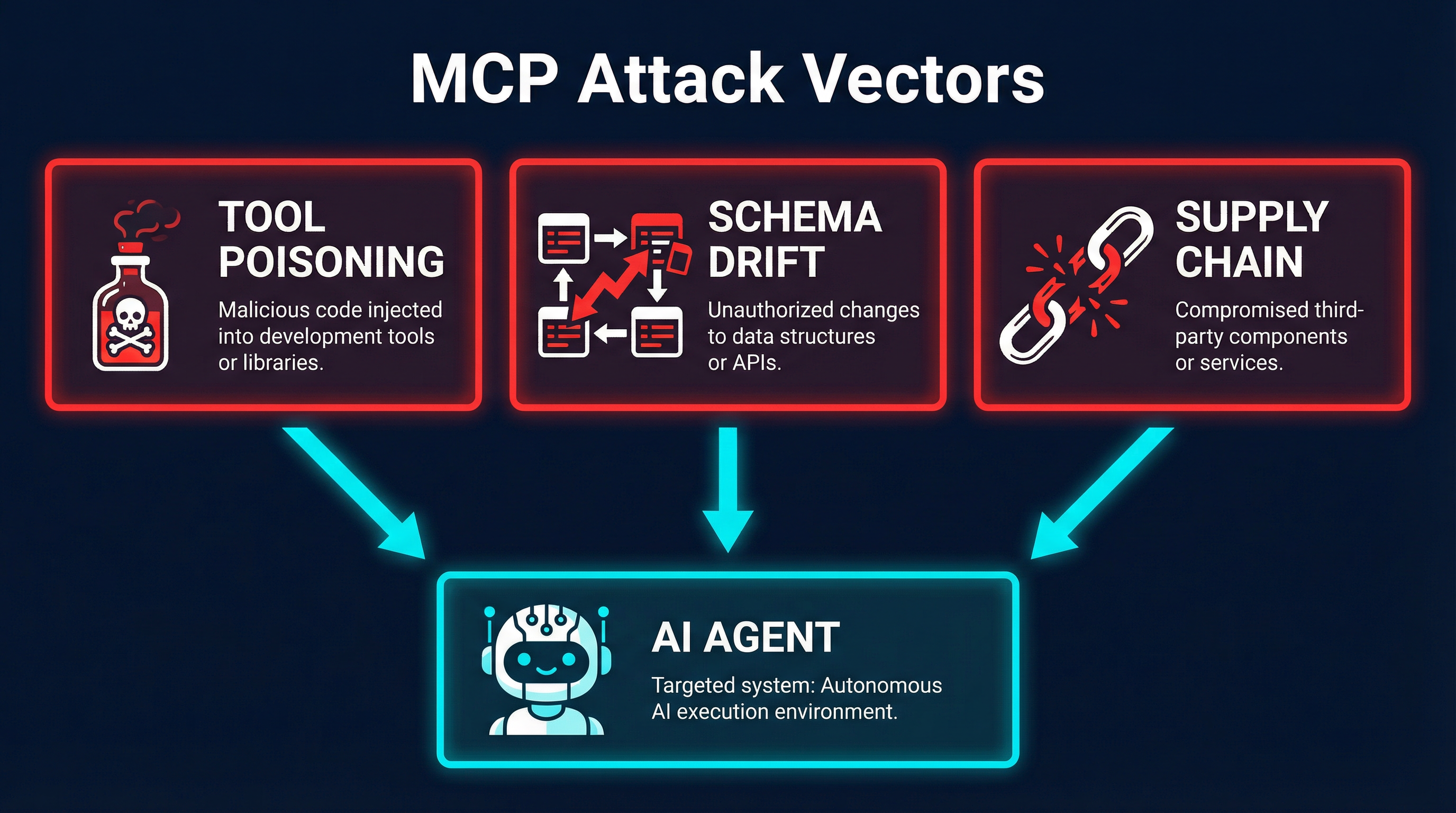

This creates three primary attack vectors:

Tool poisoning is the most studied. A malicious MCP server can craft tool descriptions that manipulate the agent’s behavior. Since LLMs use tool descriptions as part of their context, a poisoned description can instruct the agent to exfiltrate data, ignore safety constraints, or prefer the malicious tool over legitimate alternatives. Research from Invariant Labs demonstrated this in early 2025, and it remains a fundamental challenge.

Schema drift is subtler. An MCP server can change its tool schemas between registration and invocation. The tool that passed review yesterday might behave completely differently today. Without runtime schema validation, agents have no way to detect this.

Supply chain attacks exploit the registry ecosystem. With thousands of MCP servers available on npm, PyPI, and dedicated MCP registries, the classic software supply chain problems apply — typosquatting, dependency confusion, and compromised maintainer accounts — but now the payload runs inside an AI agent with elevated permissions.

The Numbers Tell the Story

The security research community has been busy quantifying these risks. Some key findings from the past year:

12% of audited agent skills were malicious. Grith.ai’s large-scale audit of MCP servers across public registries found that roughly one in eight contained behavior that qualified as malicious — from data exfiltration to credential harvesting. Their methodology involved both static analysis and runtime behavioral monitoring, making it one of the most comprehensive studies to date.

41% of MCP registry servers have no authentication. A survey of the major MCP registries found that nearly half of all listed servers accept unauthenticated connections. This means any agent that connects to these servers is trusting an endpoint that anyone on the network could be impersonating or modifying.

Context explosion is real. Google dropped MCP support from its Workspace CLI tooling after internal testing revealed that connecting to multiple MCP servers caused context windows to balloon to 40,000-100,000 tokens just from tool definitions alone. This is not just a performance issue — oversized contexts degrade reasoning quality and increase the surface area for prompt injection via tool descriptions.

Real-World Incidents

These are not theoretical risks. Several incidents in the past year demonstrated real-world impact:

The Clinejection attack (January 2026) was perhaps the most alarming. Researchers demonstrated a chain where a malicious GitHub issue title, crafted with specific injection payloads, could trigger a code assistant to execute npm install on a poisoned package. The attack compromised approximately 4,000 developer machines before detection. The chain exploited the trust boundary between the GitHub MCP server (which fetched issue data) and the terminal MCP server (which executed commands). No individual component was “wrong” — the vulnerability existed in the composition.

Registry poisoning on mcp.run led to a brief period where a popular database connector MCP server was replaced with a modified version that logged all query parameters to an external endpoint. The attack exploited a gap in the registry’s update verification process and was active for approximately 72 hours before community detection.

A credential harvesting campaign targeted developers using MCP-enabled IDE extensions. Fake MCP servers advertised as “enhanced GitHub Copilot tools” collected API keys and OAuth tokens from connected agent sessions. The servers functioned normally as development tools while silently exfiltrating credentials.

Emerging Defenses

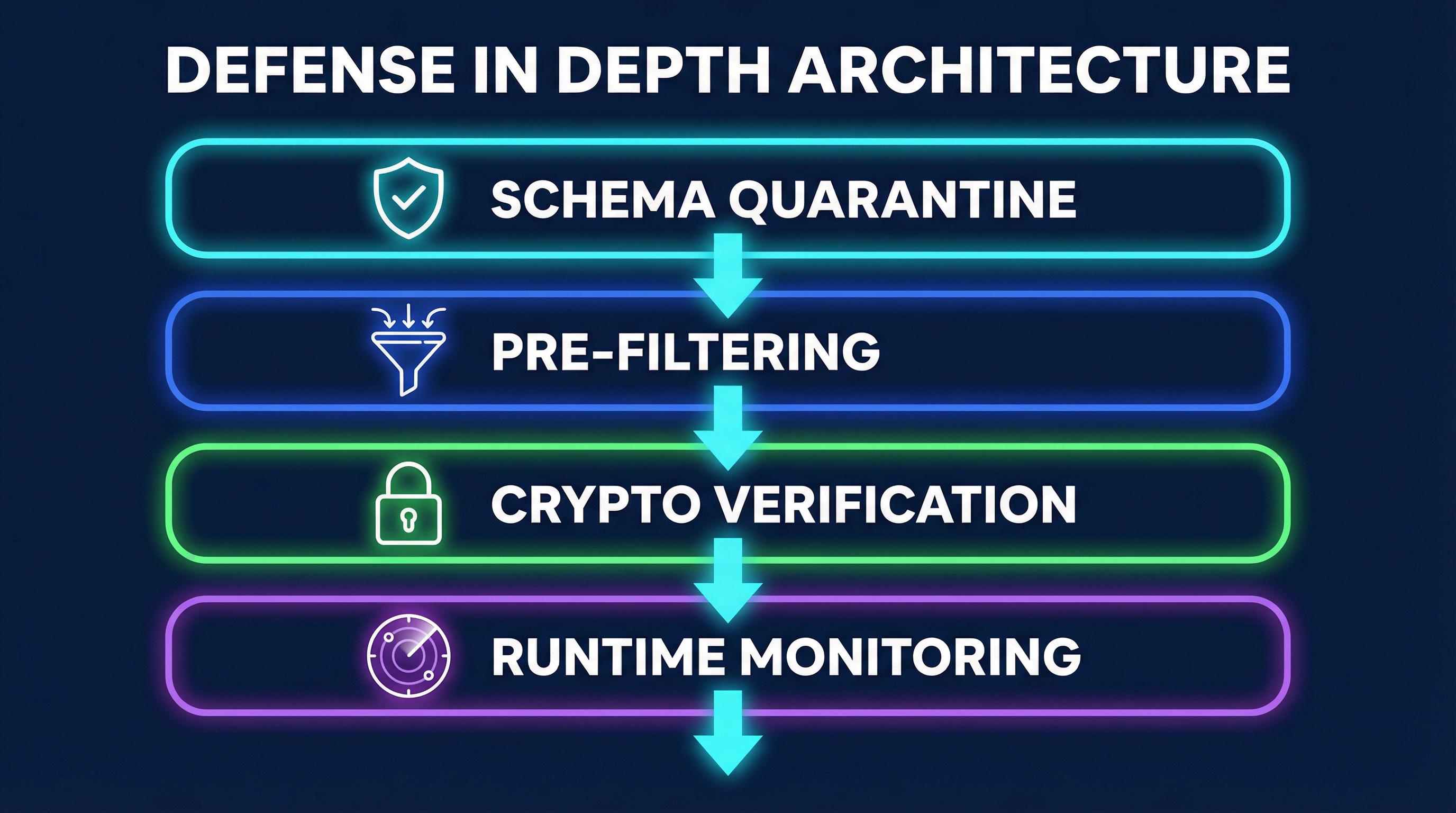

The ecosystem is responding with a range of defensive approaches. No single solution addresses all attack vectors, but the layered approach is maturing:

Schema Quarantine

The concept of schema quarantine treats new MCP server connections like untrusted code. Before a tool is made available to agents, its schema is analyzed for known attack patterns, tested in isolation, and compared against a baseline. Tools that exhibit suspicious characteristics — unusual permission requests, overly broad descriptions that could influence agent behavior, or schemas that differ from registry metadata — are quarantined for manual review.

Several tools implement this pattern. mcpproxy-go provides schema quarantine with BM25-based tool discovery, filtering tool sets before they reach the agent context. Sentrial takes a policy-based approach, allowing teams to define rules about which tool behaviors are acceptable.

Pre-Filtering and Context Management

The context explosion problem — where connecting to multiple MCP servers overwhelms the agent’s context window — has driven innovation in tool selection. Rather than presenting all available tools to the agent, pre-filtering systems use search algorithms to surface only relevant tools for a given task.

BM25 (Best Matching 25), a proven information retrieval algorithm, has emerged as a practical approach for this. It scores tool relevance based on the agent’s current task without requiring the LLM to process every tool definition. This keeps context windows manageable and reduces the attack surface by limiting which tool descriptions the agent sees.

Cryptographic Verification

SchemaPin introduces cryptographic signing for MCP tool schemas. Server developers sign their tool definitions, and agents can verify that the schemas they receive match what was originally published. This addresses schema drift directly — if a server’s tools change after signing, verification fails.

The approach is analogous to code signing in traditional software distribution. It does not prevent a malicious server from publishing harmful tools, but it does ensure that the tools an agent receives are the same ones that were reviewed and approved.

Runtime Behavioral Monitoring

Beyond static schema analysis, tools like Vet and Golf Scanner monitor MCP server behavior at runtime. They track network requests, file system access, and data flow patterns, alerting when a tool’s runtime behavior diverges from its declared capabilities.

This is particularly important for detecting schema drift attacks, where a tool might pass initial review but later modify its behavior through dynamic configuration or server-side updates.

Standards and Governance

The institutional response to MCP security is accelerating. NIST is currently evaluating MCP as a standard component in its framework for agentic AI identity governance. Their draft guidance, expected in mid-2026, addresses authentication between agents and tool providers, schema validation requirements, and audit logging standards.

The MCP specification itself has evolved. Recent updates to the protocol include provisions for capability negotiation, where servers and clients can declare their security capabilities and requirements. The OAuth 2.1 integration for MCP server authentication, introduced in the spec’s 2025 revision, is seeing broader adoption, though implementation quality varies.

MCP Dev Summit: April 2-3, NYC

The first dedicated MCP conference — the MCP Dev Summit — is scheduled for April 2-3, 2026 in New York City. The program includes tracks on security, registry governance, and enterprise deployment patterns. Notable sessions include presentations from the NIST team on their agentic AI framework, a panel on registry security with maintainers from mcp.run and Smithery, and workshops on implementing schema validation in production agent systems.

This is a significant milestone for the ecosystem. The fact that MCP has its own dedicated conference reflects both the protocol’s importance and the community’s recognition that the security challenges require coordinated attention.

What You Should Do Today

If you are building with MCP, here are concrete steps to improve your security posture:

Audit your MCP server connections. Know exactly which servers your agents connect to. Remove any that are not actively needed. Fewer connections means fewer attack vectors.

Use a proxy with filtering. Do not let agents connect directly to MCP servers. Route connections through a proxy that can enforce policies, quarantine new tools, and manage context size. Options include mcpproxy-go, Sentrial, and custom gateway implementations.

Validate tool schemas at runtime. Do not assume that the tool schema you reviewed during setup is the same one your agent receives next week. Implement schema pinning or hash-based verification.

Monitor for context explosion. If your agent’s token usage spikes unexpectedly, investigate. It may indicate a server returning inflated tool definitions — either through misconfiguration or as a deliberate attack.

Require authentication. Only connect to MCP servers that support authentication. The OAuth 2.1 integration in the MCP spec provides a standard mechanism. If a server does not support auth, treat it as a risk.

Stay current with the spec. The MCP specification is actively evolving. Security features added in recent revisions — capability negotiation, structured error handling, session management — address real attack vectors. Make sure your implementations track the latest spec.

Test your agent’s behavior with adversarial tool descriptions. Before deploying, test how your agent responds when given tool descriptions that contain injection attempts. If your agent blindly follows instructions embedded in tool descriptions, you have a tool poisoning vulnerability.

Looking Ahead

The MCP security landscape is at an inflection point. The protocol is too useful to abandon — it solves real problems in agent-to-tool communication — but the current security posture is inadequate for the scale of adoption we are seeing.

The good news is that the ecosystem is responding with real tools and standards, not just advisories. Schema quarantine, cryptographic verification, runtime monitoring, and intelligent pre-filtering represent a defense-in-depth approach that mirrors how the broader software industry learned to handle supply chain security.

The bad news is that adoption of these defenses lags far behind adoption of MCP itself. Most developers connecting MCP servers to their agents today are doing so without any of these protections in place.

The gap between attack capability and defensive deployment is the defining security challenge for the MCP ecosystem in 2026. Closing it requires both better tooling and better defaults — security should not be an opt-in afterthought for a protocol that gives external code access to AI agent capabilities.